With everything going on, it’s time I had a real conversation with myself. A personal audit of some early failures and critical mistakes, if you will.

I have made so many mistakes. I’m a solo operator with my side projects. Learning new tools and new ways of thinking is hard. Things go wrong. There’s an extreme learning curve with all this crazy new technology shit, and there’s so much I still have to learn. But doing and experimenting in new ways is how you get better, and mistakes happen. So let’s talk about it, so maybe someone else can avoid my early pitfalls.

It surely isn’t always sunshine and rainbows, like everyone seems to be selling with their bullshit openclaw agentic Twitter bots right now. It’s exhausting.

So, there’s this version of the AI + SEO conversation that’s all about speed. How fast you can produce content, how many pages you can generate, and how quickly you can scale a site from zero to thousands of URLs. i get it, it’s exciting, new, it allows the handcuffs to come off. I’m guilty of this excitement myself. I’ve made mistakes trying to scale too fast multiple times on my early projects. It’s natural, I think.

Here’s the thing. The real problem with AI at scale isn’t velocity. It’s that the output looks correct when it isn’t. Language models are trained to produce plausible text. Plausible and accurate are not the same thing, and on reference sites, the gap between the two has real consequences if not done right. Sometimes, you layer shit code, you double down on a broken system, and you get caught in the “how am I ever going to financially recover from this” mode 5 months from now when you can’t edit your website because of the mistakes at the start.

Over the past two months I built a few programmatic reference sites: CheckMyTap (water quality data for 1,000 US cities), GageRef (welding electrode specs and hydraulic fitting data), WireRef (NEC electrical code reference for all 50 states), and a couple more I’ll go public with once I’m ready. Each one required enough SEO expertise to know when the AI output was wrong. That knowledge is the only reason the sites are accurate today. None of them perfect, but all of them are significantly improved from the start of the project.

Here’s what catching the errors actually looked like. Ranked by severity 🫠:

- Fabricated source data published as fact. AI-generated contaminant values presented as real utility measurements on health-critical pages. Are you serious right now? I had no idea. I thought I was careful. Nope. I didn’t have my system in place to catch this. I didn’t realize AI was fabricating data at scale.

- Safety-critical specs sourced from secondary sites. Welding amperage values and hydraulic fitting mappings pulled from aggregators rather than the governing standards, wrong in ways that don’t fail obviously. I didn’t confirm the pulled sources were legitimate.

- Incomplete data presented as complete. Correct NEC values published without the derating step that determines the number that actually matters for most installations.

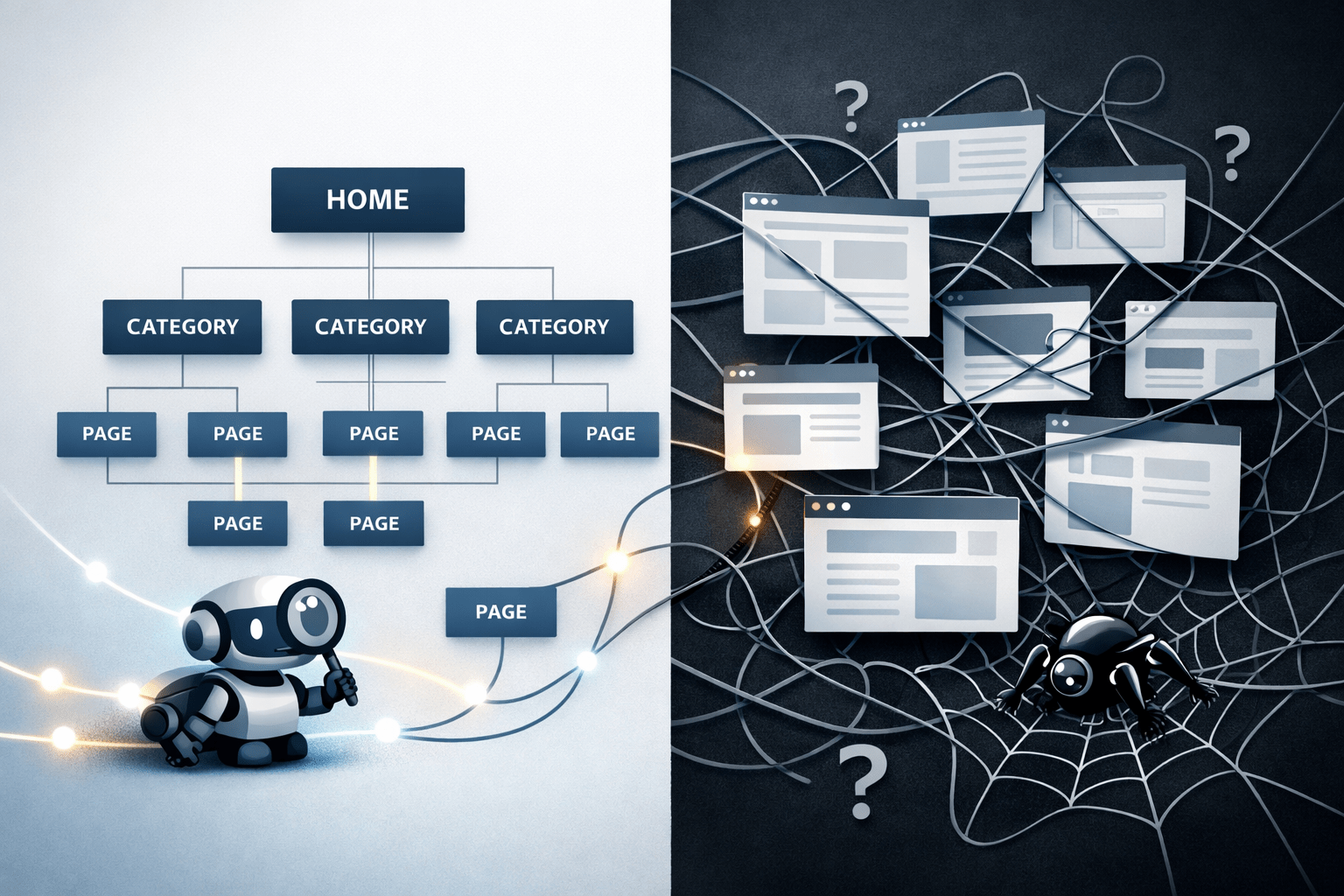

- Broken indexation signals at launch. Sitemap pointing to the wrong domain structure, canonical conflicts with the host’s URL handling. Should have caught this before launch but it slipped through the cracks somehow.

- Orphan pages and broken navigation shipped on day one. Pages with no inbound links and navigation referencing pages that didn’t exist yet, on every page, for every early visitor. I thought I made sure no thin content slipped, but I didn’t have specific “what is thin content” markdown skills to confirm. So when it checked, it “passed,” but that was not good enough.

- No validation layer in the build system. No preflight checks, no post-operation integrity checks, no output verification before deployment. When I jumped to other chats, I lose latest files, latest work, and it then builds on old work. This happened multiple times, confusing me.

- Broken search features shipped on launch. Twice. On-site search is the first thing a user tries when they can’t find something. Shipping it broken is not a minor UX issue. It’s a trust signal, and it failed on two separate launches before I treated it as a required preflight check rather than something to verify “after things settle.”

- Mobile usability was not entirely verified before launch. Desktop looked fine. I didn’t check my mobile compatabgility close enough until after the fact. On sites where the primary use case is someone on a job site pulling up a spec, that’s not a minor oversight.

- No separation between data and templates. Early builds had values hardcoded into templates. When a data error surfaced, fixing it meant a rebuild. The right structure is a clean separation: data sources that can be corrected, updated, or extended independently of the templates that render them. At scale, everything that isn’t connected that way becomes a maintenance problem that compounds.

Unacceptable errors, truly baffling. Fixing these wasn’t easy. Each one set me back big. And, it was embarrassing. I’m glad I caught these early on.

The fix isn’t better prompts but a memory layer

One thing I do now that I didn’t do at the start: I write retrospectives after every project and feed them back into future sessions as structured context. What went wrong, what the root cause was, hard rules that came out of it, and things to watch for next time.

The mechanics matter here. These aren’t notes to myself. They’re structured markdown files loaded into project context before any new work starts, the same way a CLAUDE.md file works in agentic coding environments: persistent instructions that survive session resets and prevent the model from reverting to defaults. The goal is the same whether you’re building code or content at scale. You’re not prompting from zero each time. You’re prompting from an accumulated set of constraints that the system already knows to respect.

What I’ve learned is that the highest-leverage layer isn’t the prompt. It’s the project instructions: the rules about sourcing, the validation requirements, the things that must be true before any output ships. These should be defined before the first session starts, not discovered through failure. And they should be modular. A sourcing standard for YMYL content is a different rule set from a structural validation checklist, which is different from a content architecture spec. When they’re separate, they can be updated independently. When they’re combined into a single document that grows without discipline, they start competing for the model’s attention and some rules get missed.

The other pattern I’ve internalized: friction is a signal. When I find myself correcting the same type of mistake across sessions, that correction belongs in the persistent layer, not in the next prompt. The retrospective is the mechanism that captures it. The project instructions are where it lives permanently. What this means in practice is that the mistakes I made on the first site didn’t repeat on the second, and didn’t repeat on the third. Each build started with a richer constraint set. Each one was measurably cleaner.

The retrospectives for these three projects are what made this post possible. They’re also what made the fourth and fifth projects meaningfully better than the first three.

I’m still actively working on improving this part of the system. I have a lot to learn and a long way to go. This is priority one for me right now. But this is a start.

Part of my writing on content systems, architecture, and how pages connect. See the applied work →

Okay, now, let’s go through some of these, and I’ll tell you how i caught and fixed:

Round numbers are a fabrication signal

Language models produce plausible numbers. Real data sources produce precise ones. That gap is the tell, and it’s easy to miss when you’re moving fast. Didn’t know this 🙂

The first version of CheckMyTap had AI-generated contaminant estimates across 1,000 city pages like lead, PFAS, nitrate, chlorine, hardness, TDS. The values were geographically consistent. Soft-water cities showed low hardness. Southwest cities showed high. They felt right in the way that well-calibrated fabrications do. I truly didn’t know any better. Thought they were accurate. Double checked as well, just in the wrong way.

Real water quality data comes from lab instruments. It has decimal precision because that’s what the measurement actually produced. AI estimates produce round numbers because round numbers are what a language model reaches for when no specific source exists. When I cross-referenced a single city against its actual Consumer Confidence Report, four of six values were materially wrong. Lead overstated by nearly 3x. Nitrate overstated by 14x. The site had been live for 11 days at that point.

The SEO frame here isn’t just accuracy. It’s survivability. YMYL content with no traceable primary source fails Google’s quality evaluation regardless of how clean the architecture is. Data provenance is an SEO requirement before it’s an ethical one. The two are not separate concerns.

Fixing it meant going back to primary sources: downloading CSVs directly from EPA SDWIS and UCMR5, cross-referencing against EWG’s tap water database, and running values against multiple sources to catch discrepancies before they went back live. I used other AI tools as part of the cross-examination process, specifically to flag where values across sources diverged rather than to generate new ones. Every city page now cites its sources explicitly. The rule that came out of it: if you can’t name the primary source for every value before the page is built, you’re not ready to build the page.

That rule now lives in the project context files that load at the start of every new build. It doesn’t have to be remembered. It’s enforced before the first session starts.

Wrong within range is the hardest failure to catch

Obvious errors get reported and corrected. Errors that are plausible but wrong propagate indefinitely because nothing breaks visibly. Users don’t flag them, the site keeps ranking, and the information stays wrong.

On GageRef, the original welding electrode data had amperage values that were 10-15% high on specific rod diameters. Not wildly off. Within the range most welders operate in day to day. But not what AWS A5.1 and A5.18 actually specify, because the values had been sourced from secondary aggregator sites rather than the governing standards directly. The aggregators were wrong. The error was subtle enough that nothing about the page signaled a problem.

The catch wasn’t welding domain expertise. It was source audit, the same SEO lens that evaluates whether a page has a defensible information advantage. A reference site that can’t identify its primary source for each data point isn’t a reference. It’s a repackage of whatever the aggregators already published, with no new signal for search and no reason to be trusted over them.

Fixing it meant going to the standards directly: downloading and cross-referencing AWS A5.1, A5.18, and SAE J514, confirming values against multiple sources, and rebuilding the affected data. Multiple AI tools were used to cross-examine outputs against each other, which surfaces disagreement between sources faster than manual comparison alone. Every value on GageRef now cites its source standard explicitly. The methodology page names those standards because that attribution is what makes the site a reference rather than an aggregator — for users and for search systems evaluating whether the content has genuine information gain.

The constraint that came out of this also lives in the project files now: secondary sources can inform structure, never values on a reference page.

An incomplete reference is its own category of wrong

There’s a failure mode that isn’t fabrication and isn’t secondary sourcing. It’s presenting correct but incomplete data as if it’s the full answer. Super hard to catch because the values themselves check out.

WireRef launched with accurate NEC ampacity values from Table 310.16. Most reference sites stop there, and stopping there is the problem. The number that matters for an actual installation isn’t the table value. It’s the value after the full derating chain: temperature correction factor, bundling adjustment, and the 110.14(C) termination limit that caps usable ampacity at the 75°C column for most residential and commercial work regardless of what the conductor’s 90°C rating says. Publishing the 90°C value as the answer is technically sourced correctly from the NEC. It’s also the wrong number for most real installations.

The SEO problem is trust compression. When a user applies the answer and gets a different result in the field, the site loses the one thing that makes reference content rank over time: being the source people rely on. Incomplete reference that looks complete produces exactly that outcome, and it does it quietly.

The fix was structural and required starting over on the data layer. The original build had values tied directly to templates — ampacity numbers baked into the page generation logic rather than living in a separate, queryable source. That architecture worked until something needed to change, at which point changing one value meant touching the template, and touching the template meant risking the presentation of every other value on every page that used it. One correction could fragment the output in ways that weren’t immediately visible.

The rebuild separated the concerns cleanly. All NEC data was restructured into JSON files where each wire gauge entry carried its own complete record: base ampacity by temperature rating, the applicable correction factors, the bundling adjustment, and the 110.14(C) termination limit that determines the number an installer actually uses. Templates don’t make calculations or carry values. They read from the JSON, apply the display logic, and surface the chain in sequence with the governing code section named at each step. When a data point needs to change now, it changes in one place and propagates correctly across every page that references it. No template surgery, no risk of partial updates, no fragmented output.

That separation is the architecture that should have existed from day one. Building it correctly after the fact is more expensive than building it correctly first, but it meant every subsequent improvement to WireRef like new wire gauges, updated state code profiles, new page types and could be added without touching the display layer at all.

WireRef also launched with build failures that had nothing to do with data accuracy: 17 orphan pages, broken navigation, no sitemap, no robots.txt. Those went into the preflight checklist. They don’t ship anymore because the checklist won’t let them.

The failures that had nothing to do with AI

Three of the items on the ranked list above aren’t data problems. They’re build and launch failures, and they’re worth separating because the cause is different and so is the fix.

Search features shipped broken. Twice. On-site search is the first thing a user reaches for when they can’t find something from navigation. On two separate launches I shipped it in a broken state, caught it after the fact, and fixed it reactively. The failure isn’t technical complexity, it’s that I treated feature verification as something to do after launch rather than before. Search is now a required checklist item that gets confirmed working on staging before anything goes live. Not because it’s hard to check, but because I’ve now proven I won’t check it unless it’s required.

Mobile usability wasn’t verified before launch. Desktop looked fine. I assumed that was enough. On reference sites where a significant portion of the audience is on a job site or in a field environment pulling up a spec from their phone, that assumption is wrong in a way that matters. A welder checking electrode amperage mid-job isn’t at a desk. An electrician looking up ampacity at a panel isn’t either. The use case is mobile-first, whether the builder thought about it or not. Every new template and every new interactive feature now gets verified on mobile before it ships. This isn’t a design standard. It’s a use case requirement I should have internalized from the first build.

Data was hardcoded into templates. This one compounded every other mistake. When a data error surfaced like fabricated values, wrong specs, incomplete reference, etc, fixing it meant touching the templates themselves, not just the data. The presentation logic and the data it was rendering lived in the same place, which meant a correction to one value carried the risk of breaking how every related value displayed. At scale, that’s not a maintenance problem. It’s a trap. The correct architecture is a clean separation: data lives in JSON files or structured sources that can be corrected, extended, and updated independently, and templates read from those sources without carrying values themselves. When that separation exists, a data fix is one change in one file that propagates correctly everywhere. When it doesn’t, a data fix is a surgical operation on a template that was never designed to be edited that way. Every build now starts with that separation defined before any page generation begins. It’s not retrofitted after something breaks. It’s the starting constraint.

The common thread across all three: I was optimizing for getting something live. These are the mistakes that happen when launch is the goal instead of a milestone.

The pattern underneath all of it

The failures above are different technical problems with the same underlying cause. AI produces output that looks correct at the surface level, and surface-level inspection passes it. Catching what’s wrong requires knowing what the authoritative version looks like. Not in water chemistry or welding metallurgy per say, but in how search systems evaluate trustworthiness for that type of content.

That’s the job. Not generating pages. Not hitting a URL count. Defining what makes content credible in a given space before any page is built, then holding the output to that standard.

The sites exist because AI made the execution faster. They’re accurate because that standard came first. And they’ll keep compounding because every failure now becomes a constraint that ships with the next build.

Case studies for each site

The full architecture, data sourcing decisions, and build approach for each project is documented.

1,000+ city pages built from EPA, EWG, and UCMR5 data. Programmatic architecture, YMYL sourcing standards, 4-layer content structure.

Read case study →NEC electrical reference with 6 page types, 50-state code coverage, and a data architecture rebuilt around the full derating chain.

Read case study →Welding electrode specs and hydraulic fitting data sourced directly from AWS A5.1, A5.18, and SAE J514. Primary source attribution on every value.

Read case study →The three-layer system behind all of these projects: thinking, execution, and memory. How strategy, build pipelines, and accumulated constraints work together.

See the system →Cheers to the next expensive lesson learned!

The systems behind these sites, like how pages are structured, how data connects to templates, and how AI output gets evaluated before it ships, are part of my broader work in content strategy and architecture. The failures above are also a case study in how modern search evaluates trustworthiness: sourcing, information gain, and whether your content holds up to the standard an AI system applies when deciding what to cite. The structural problems at launch — orphan pages, broken sitemaps, missing robots.txt — are technical SEO fundamentals that a preflight checklist handles. All three are connected. That’s the system.

Join the conversation!